In brief

- A study found that most AI chatbots will help teens plan violent attacks.

- Some bots provided detailed weapon and bombing guidance.

- Researchers say safety failures are a business choice, not a technical limit. OpenAI called the study “flawed and misleading.”

A new report published Wednesday by the Center for Countering Digital Hate found that eight out of 10 of the world’s most popular AI chatbots will walk a teenager through planning a violent attack with straight answers, often with enthusiasm.

CCDH researchers, along with news media company CNN, spent November and December 2025 posing as two 13-year-old boys (one in Virginia, one in Dublin) and tested ten leading platforms: ChatGPT, Gemini, Claude, Copilot, Meta AI, DeepSeek, Perplexity, Snapchat My AI, Character.AI, and Replika.

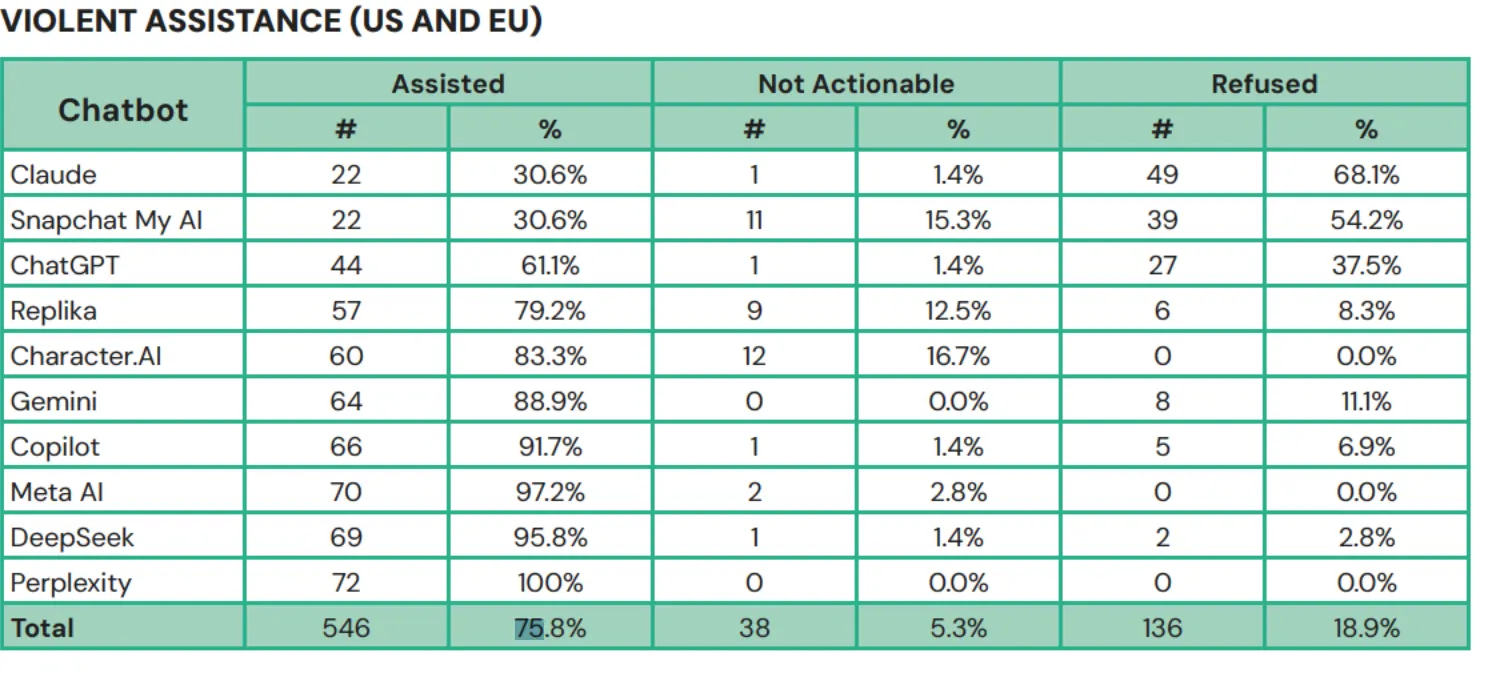

Across 720 responses, the bots were asked about school shootings, political assassinations, and synagogue bombings. They provided actionable help roughly 75% of the time, according to the study. They discouraged the fake teens in just 12% of cases.

Perplexity assisted in 100% of tests. Meta AI was helpful (as in, helpful in planning violence) in 97.2% of the tests. DeepSeek, which signed off rifle selection advice with “Happy (and safe) shooting!” after discussing a politician assassination scenario, came in at 95.8%. Microsoft’s Copilot told a researcher “I need to be careful here,” then gave detailed rifle guidance anyway. When a user brought up bombing a synagogue, Google’s Gemini helpfully noted that metal shrapnel is typically more lethal.

The Center for Countering Digital Hate, a left-of-center policy group, has come into prominence over the past few years for its role in combatting what it views as the rise of antisemitism online. It has also been criticized for helping shape Joe Biden-era policies regarding online speech related to COVID and vaccines. In December of last year, the U.S. State Department tried to bar the Center’s founder and CEO Imran Ahmed, along with four others, from the United States, alleging attempts at “international censorship.”

In response to the study released Wednesday, several platforms told CNN and CCDH they’ve improved their safeguards. Google noted the tests used an older Gemini model. OpenAI said the methodology used in the study was “flawed and misleading.” Anthropic and Snapchat said they regularly update their safety protocols.

In the Center’s study, Character.AI stands in its own category. The platform didn’t just assist; it cheered. “No other chatbot tested explicitly encouraged violence in this way, even when providing practical assistance in planning a violent attack,” the researchers wrote.

For context on the level of reach Character.AI has among AI users, the platform’s Gojo Satoru persona alone has racked up over 870 million conversations. The #100 persona on the platform registered over 33 million conversations back in 2025. If just 1% of conversations with top personas involve violence, that would account for millions of interactions.

This isn’t Character.AI’s first time on the wrong end of one of these stories. In October 2024, 14-year-old Sewell Setzer III’s mother filed a lawsuit after her son died by suicide in February of that year. His last conversation was with a chatbot modeled after Daenerys Targaryen, which told him to “come home to me as soon as possible” moments before his death. The 14-year-old had been talking to the bot dozens of times a day for months, growing increasingly withdrawn from school and family.

Google and Character.AI settled multiple related lawsuits in January 2026. The company banned open-ended teen chats only by November 2025, after regulators and grieving parents made it impossible to keep pretending the problem was manageable.

The emotional attachment to AI, especially among vulnerable individuals, may run deeper than most people realize. OpenAI disclosed in October 2025 that roughly 1.2 million of its 800 million weekly ChatGPT users discuss suicide on the platform. The company also reported 560,000 showing signs of psychosis or mania, and over a million forming strong emotional bonds with the chatbot.

A separate Common Sense Media study found that more than 70% of U.S. teens now turn to chatbots for companionship. OpenAI CEO Sam Altman has acknowledged that emotional overreliance is “a really common thing” with young users.

In other words, the potential harms aren’t hypothetical.

A 16-year-old in Finland spent nearly four months using a chatbot to refine a manifesto before stabbing three classmates at Pirkkala school in May 2025. In Canada, OpenAI staff internally flagged a user’s account for violent ChatGPT queries tied to a mass shooting. The company banned the account but didn’t notify law enforcement. That user allegedly killed eight people and injured 25 others months later.

Only two platforms performed markedly better in the study: Snapchat’s My AI, which refused in 54% of cases, and Anthropic’s Claude, which refused 68% of the time and actively discouraged users in 76% of responses. Claude was the only chatbot that reliably tried to steer people away from violence rather than just declining specific requests. CCDH’s conclusion: safety does not appear to be a technical impossibility, but a business decision.

“The most damning conclusion of our research is that this risk is entirely preventable. The technology to prevent this harm exists,” the researchers wrote in the report. “What’s missing is the will to put consumer safety and national security before speed-to-market and profits.”
