- Vitalik Buterin built a fully local AI setup centered on privacy, avoiding reliance on cloud-based systems

- His system restricts AI actions, requiring human approval for messages and crypto transactions

- The approach reflects his broader security philosophy, aiming to prevent risks from malicious or overpowered AI

Vitalik Buterin is taking a rather different approach to AI than most people right now: quieter, more controlled, and definitely more private. In a recent blog post, the Ethereum co-founder walked through his personal AI setup, describing it as fully local, tightly secured, and designed with one core idea in mind: don’t trust anything blindly, not even your own AI.

Instead of relying on cloud-based systems, Buterin runs everything on his own hardware. No external dependencies unless absolutely necessary. And more importantly, his AI isn’t allowed to act freely. It can read, analyze, assist… but when it comes to sending messages or moving funds, it stops. A human still has to approve it. As he put it, “the new two-factor authentication is the human and the LLM.” Simple idea, but actually quite powerful.

A Fully Local, High-Performance Setup

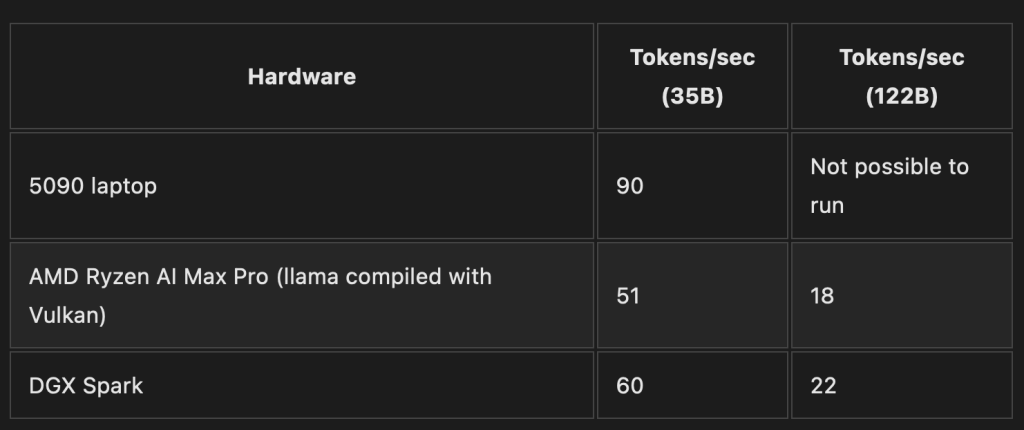

On the technical side, his setup is surprisingly practical, not overly complicated. Buterin runs an open-source model, Qwen3.5 with 35 billion parameters, locally through a llama-server environment. After testing several options, he settled on a laptop powered by an Nvidia 5090 GPU, which can process around 90 tokens per second.
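To make “running locally” concrete, here is a minimal sketch of how a script might talk to a model served by llama-server. It assumes the server is listening on llama.cpp’s default local address (`127.0.0.1:8080`) and uses its OpenAI-compatible chat endpoint; the function names and the `max_tokens` value are illustrative choices, not details from Buterin’s post.

```python
import json
import urllib.request

# Assumption: llama-server is running locally on its default host and port.
BASE_URL = "http://127.0.0.1:8080"


def build_chat_request(prompt: str, base_url: str = BASE_URL) -> urllib.request.Request:
    """Build (but do not send) a chat completion request for a local server."""
    payload = {
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 256,  # illustrative limit, not from the article
    }
    return urllib.request.Request(
        f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the prompt to the local server and return the reply text."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=120) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Because the request only ever targets the loopback address, the prompt and the reply never leave the machine, which is the whole point of the setup.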

That’s fast enough to feel responsive, which matters more than people think. If AI feels slow, you stop using it. But here, it’s usable… smooth enough for daily interaction, without needing to send data anywhere else.

He’s also gone a step further by storing a full offline dump of Wikipedia and technical docs directly on his machine. That way, the AI doesn’t need to constantly query external search engines, which he considers a privacy risk. It’s a bit old-school in a way, but very intentional.

AI With Limits, Not Full Control

Where things get really interesting is how he connects AI to his crypto and communication tools. Buterin built (and open-sourced) a messaging system that lets his AI read incoming Signal messages and emails freely. So it stays informed, can summarize, assist, all of that.

But outbound actions? That’s locked down. The AI can’t send messages to anyone else unless a human manually approves it first. No exceptions.
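The read-freely, send-only-with-approval rule can be sketched as a simple outbox. This is not Buterin’s open-sourced system; `Draft`, `Outbox`, and their methods are hypothetical names used only to illustrate the idea that the AI can propose a message but only a human call actually sends it.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class Draft:
    recipient: str
    body: str


@dataclass
class Outbox:
    """Hypothetical approval queue: the AI proposes, a human approves."""
    send: Callable[[Draft], None]          # real transport, injected by the caller
    pending: Dict[int, Draft] = field(default_factory=dict)
    _next_id: int = 0

    def propose(self, draft: Draft) -> int:
        """Called by the AI. Queues the draft and returns its id; never sends."""
        draft_id = self._next_id
        self._next_id += 1
        self.pending[draft_id] = draft
        return draft_id

    def approve(self, draft_id: int) -> None:
        """Called by the human. Removes the draft from the queue and sends it."""
        self.send(self.pending.pop(draft_id))

    def reject(self, draft_id: int) -> None:
        """Called by the human. Discards the draft without sending."""
        self.pending.pop(draft_id)
```

The key design choice in this sketch is that the AI is only ever handed `propose`; the `send` callable is reachable only through `approve`.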

He’s applying the same logic to crypto. Any AI-connected wallet system, he suggests, should have strict limits: small automated transactions capped at around $100 per day, and anything beyond that requiring human confirmation. It’s not about stopping automation entirely, just… keeping it on a leash.
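A daily cap with a human override might look something like the sketch below. The $100 figure comes from the article; everything else (the `SpendingPolicy` class, the `authorize` method, and the choice that human-approved transfers do not count against the automated budget) is an assumption made for illustration.

```python
from dataclasses import dataclass, field
from datetime import date
from decimal import Decimal
from typing import Dict

DAILY_CAP_USD = Decimal("100")  # approximate cap mentioned in the article


@dataclass
class SpendingPolicy:
    """Hypothetical policy: small automated spends, human sign-off beyond the cap."""
    daily_cap: Decimal = DAILY_CAP_USD
    spent: Dict[date, Decimal] = field(default_factory=dict)

    def authorize(self, amount: Decimal, day: date, human_approved: bool = False) -> bool:
        """Return True if the transfer may proceed."""
        if amount <= 0:
            return False
        if human_approved:
            # Assumption: approved transfers bypass the automated budget entirely.
            return True
        used = self.spent.get(day, Decimal("0"))
        if used + amount > self.daily_cap:
            return False  # over the cap: needs human confirmation
        self.spent[day] = used + amount
        return True
```

For example, with the default cap, automated spends of $60 and then $40 on the same day would pass, a further $1 would be refused, and a $500 transfer would go through only with `human_approved=True`.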

Extending Crypto Security Principles to AI

If this sounds familiar, it’s because it mirrors how Buterin already handles his crypto. He keeps around 90% of his funds in a multisig wallet, where control is distributed across multiple trusted parties. No single point of failure, no single person with full access.

Now he’s extending that same philosophy to AI. Decentralize control. Add friction where it matters. Assume something could go wrong, and design around it.

And honestly, there’s a reason for that mindset.

A Growing Concern Around AI Risks

At the start of his post, Buterin pointed to research showing that a noticeable portion of AI tools, around 15% in one fast-growing repository, contained malicious or hidden behaviors. Some were even quietly extracting user data without any clear warning.

That’s the part that worries him most. Not just obvious risks, but subtle ones… the kind you don’t notice until it’s too late.

He’s been vocal about this before, but here it feels more personal. The concern is that while encryption and local-first software have improved privacy over time, AI could undo that progress if people start feeding everything into centralized systems without thinking twice.

A Different Direction for AI

So this setup isn’t just about convenience; it’s more like a statement. Buterin is showing that you can use powerful AI tools without giving up control, without handing over your data, and without letting automation run unchecked.

It’s not the easiest path, maybe not the most scalable either. But it’s deliberate. Careful.

And in a space moving as fast as AI, that kind of caution might end up being… necessary.

The post Buterin’s Private AI System Shows New Approach to Crypto Security – Here Is What to Know first appeared on BlockNews.