In short

- A developer found that forcing Claude to talk like a caveman cuts output tokens, and therefore costs, by up to 75%.

- The internet immediately turned it into a GitHub skill.

- With Anthropic charging so much per output token, grunt mode is less of a joke and more of a budget strategy.

Somewhere between prompt engineering and performance art, a developer posted a discovery on Reddit that made the AI community laugh before paying attention: teach Claude to talk like a prehistoric human and watch your token bill shrink by up to 75%.

The post hit r/ClaudeAI last week and has since racked up over 400 comments and 10K upvotes, a rare combination of genuine technical insight and absurdist comedy that the internet tends to reward.

The mechanic is simple. Instead of letting Claude warm up with pleasantries, narrate every step it takes, and close with an offer to help further, the developer constrains the model to short, stripped-down sentences: tool first, result first, no explanation. A typical web search task that would run about 180 output tokens dropped to roughly 45. The original poster claims up to a 75% reduction in output, achieved by making the model sound like it just discovered fire.

In caveman terms, as one Redditor put it: “Why waste time say lot word when few word do trick?”

What this technique doesn’t touch is the input context: the full conversation history, attached files, and system instructions that the model re-reads on every single turn. That input often dwarfs the output, especially in longer coding sessions. Once all that input is counted, real-world sessions show savings closer to 25%, not 75%. Still meaningful, just not the headline number.
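
To see how a 75% output cut can shrink to roughly 25% of the total bill, here is a minimal Python sketch. It assumes a hypothetical session with 100,000 input tokens and 10,000 output tokens, and assumed prices of $3 per million input tokens and $15 per million output tokens; `blended_savings` is an illustrative helper, so plug in your own token counts and current pricing.

```python
def blended_savings(input_tokens: int, output_tokens: int, output_cut: float,
                    input_price_per_m: float, output_price_per_m: float) -> float:
    """Fraction of total cost saved when only output tokens shrink.

    All arguments are caller-supplied assumptions; nothing here looks up real prices.
    """
    input_cost = input_tokens * input_price_per_m / 1_000_000
    output_cost = output_tokens * output_price_per_m / 1_000_000
    saved = output_cost * output_cut  # caveman mode leaves the input cost untouched
    return saved / (input_cost + output_cost)


# Hypothetical session: input outweighs output 10:1, output priced at 5x input.
share = blended_savings(100_000, 10_000, 0.75, 3.0, 15.0)
print(f"{share:.0%} of the total bill saved")  # prints: 25% of the total bill saved
```

Here output is about a third of the total cost, so cutting it by 75% saves about a quarter overall. As a session grows, the re-read input takes a larger share and the overall saving shrinks further.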

It’s also a good idea to keep feeding the model normal instructions. Don’t give it the “caveman” talk yourself, as that could spiral into a “garbage in, garbage out” situation.

There’s also the question of intelligence degradation. A handful of researchers in the thread argued that forcing an AI to inhabit a less sophisticated persona could actively hurt its reasoning quality: the verbal constraints might bleed into cognitive ones. The concern has not been definitively settled, but it’s worth keeping in mind when evaluating results.

Skill good, skill go viral

Despite the caveats, the technique found a second life on GitHub almost immediately.

Developer Shawnchee packaged the rules into a standalone caveman-skill compatible with Claude Code, Cursor, Windsurf, Copilot, and over 40 other agents. The skill distills the technique into 10 rules, including: no filler words, execute before explaining, no meta-commentary, no preamble, no postamble, no tool announcements, explain only when needed, let code speak for itself, and treat errors as problems to fix rather than narrate.
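
As a minimal Python sketch of how rules like these end up in front of the model, the snippet below turns the nine rules named above into a system prompt and wraps it in a request body shaped like Anthropic's Messages API (`model`, `max_tokens`, `system`, `messages`). The rule wording, the header line, `build_system_prompt`, `build_request`, and the model ID placeholder are illustrative assumptions, not the skill's actual files, and nothing is sent over the network.

```python
# The nine rules as the article lists them; the skill's exact wording may differ.
CAVEMAN_RULES = [
    "No filler words.",
    "Execute before explaining.",
    "No meta-commentary.",
    "No preamble.",
    "No postamble.",
    "No tool announcements.",
    "Explain only when needed.",
    "Let code speak for itself.",
    "Treat errors as problems to fix, not to narrate.",
]


def build_system_prompt(rules: list[str]) -> str:
    # One (hypothetical) header line followed by one numbered rule per line.
    lines = ["Respond tersely. Follow these rules:"]
    lines += [f"{i}. {rule}" for i, rule in enumerate(rules, start=1)]
    return "\n".join(lines)


def build_request(user_message: str, model: str = "MODEL_ID_PLACEHOLDER",
                  max_tokens: int = 512) -> dict:
    # Field names mirror the Messages API request body; the model ID is a placeholder.
    return {
        "model": model,
        "max_tokens": max_tokens,
        "system": build_system_prompt(CAVEMAN_RULES),
        "messages": [{"role": "user", "content": user_message}],
    }


request = build_request("Find the config file that sets the log level.")
print(request["system"])
```

Note that `max_tokens` only truncates a reply that runs long; the brevity itself has to come from the instructions.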

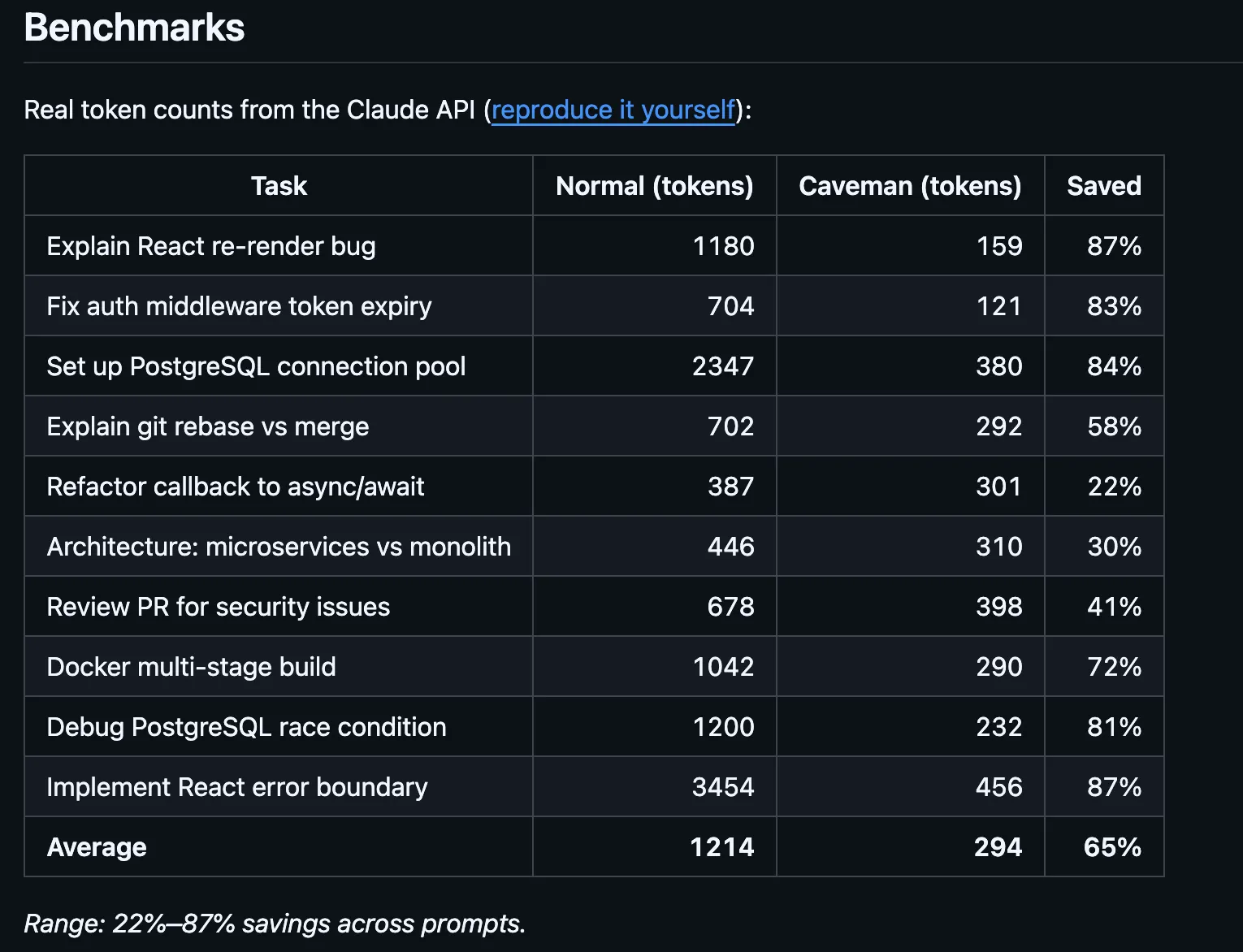

Benchmarks in the repo, verified with tiktoken, show output token reductions of 68% on web search tasks, 50% on code edits, and 72% on question-and-answer exchanges, for an average output reduction of 61% across four standard tasks.
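
The arithmetic behind figures like these is easy to reproduce. The Python sketch below counts tokens in a verbose and a caveman reply for each task, computes the percentage cut, and averages the per-task percentages. It uses a whitespace word count as a stand-in tokenizer so it runs with no dependencies; the repo used tiktoken, which is OpenAI's tokenizer, so even those counts only approximate what Claude bills. The helper names are hypothetical, and averaging per-task percentages (rather than weighting by token volume) is an assumption about how the repo computed its mean.

```python
from statistics import mean
from typing import Callable


def whitespace_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: counts whitespace-separated words.
    return len(text.split())


def reduction_pct(before: int, after: int) -> float:
    # Share of output tokens removed, as a percentage.
    return 100.0 * (before - after) / before


def benchmark(pairs: dict[str, tuple[str, str]],
              count_tokens: Callable[[str], int] = whitespace_tokens) -> dict[str, float]:
    # pairs maps a task name to (verbose_reply, caveman_reply).
    results = {task: reduction_pct(count_tokens(verbose), count_tokens(terse))
               for task, (verbose, terse) in pairs.items()}
    # Unweighted mean of the per-task percentages, computed before the key is added.
    results["average"] = mean(results.values())
    return results


print(reduction_pct(180, 45))  # the Reddit example: 75.0
print(benchmark({
    "web_search": ("Sure! I will search the web for that now. Here is what I found: "
                   "the package was renamed last year.",
                   "Package renamed last year."),
}))
```

Swapping in a real tokenizer only means passing a different `count_tokens` callable.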

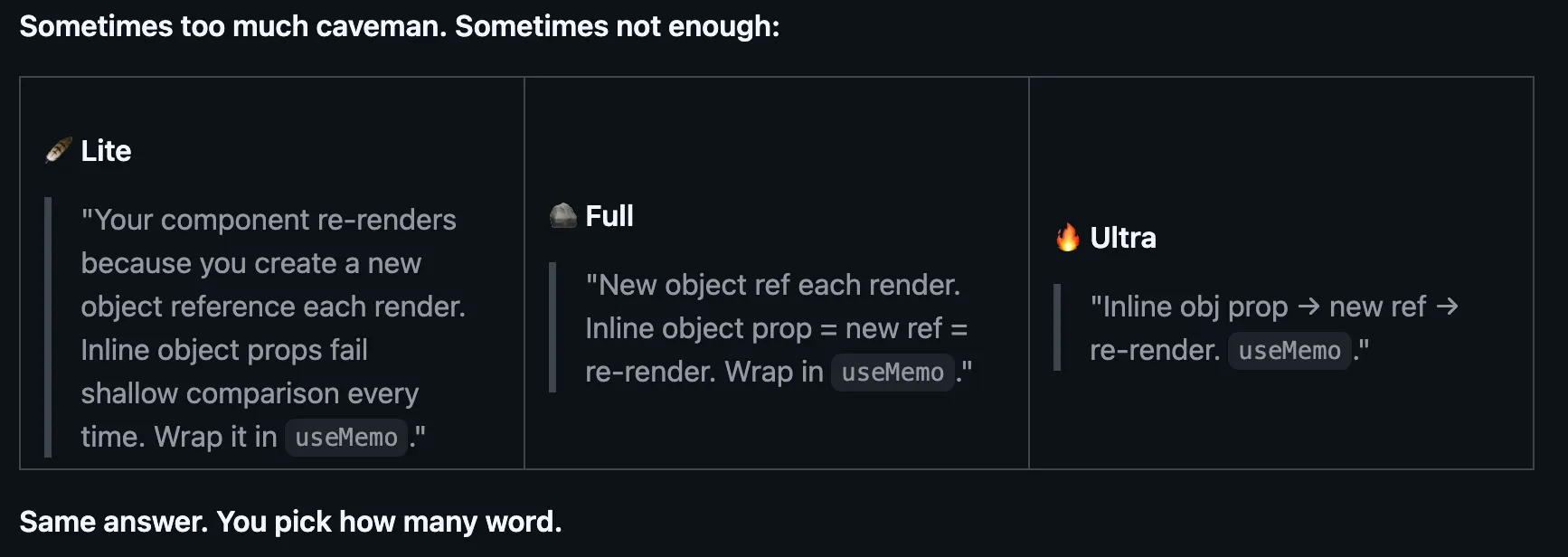

A parallel repo by developer Julius Brussee took a slightly different approach, framing the same idea as a SKILL.md file with 562 stars on GitHub. The spec: respond like a smart caveman, cut articles, filler, and pleasantries, and keep all technical substance. Code blocks stay unchanged. Error messages are quoted exactly. Technical terms stay intact. Caveman only speaks the English wrapper around the information.

This one even comes with different modes that set how much to strip, switching between Normal, Lite, and Ultra. The model does exactly the same work but gives a much shorter answer, which adds up to big savings over time.
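
The skill itself works by instructing the model, but a toy post-processor makes two of its ideas concrete: modes that strip progressively more, and a hard boundary around code. In this Python sketch the word lists, the mapping of Lite, Normal, and Ultra to specific rules, and the `cavemanify` function are all hypothetical; it only shows fenced code passing through verbatim while the prose around it gets compressed.

```python
import re

# Hypothetical word lists for illustration; the real skill states its rules as prompt text.
PLEASANTRIES = ("Sure!", "Great question!", "Let me know if you need anything else.")
ARTICLES = {"a", "an", "the"}
FILLER = {"just", "really", "basically", "actually", "simply"}

# Hypothetical mapping of modes to how much gets stripped.
MODES = {
    "lite": {"pleasantries"},
    "normal": {"pleasantries", "articles"},
    "ultra": {"pleasantries", "articles", "filler"},
}

# Capturing group so re.split keeps the fenced blocks in its result.
FENCE = re.compile(r"(```.*?```)", re.DOTALL)


def compress_prose(text: str, rules: set[str]) -> str:
    if "pleasantries" in rules:
        for phrase in PLEASANTRIES:
            text = text.replace(phrase, "")
    drop = (ARTICLES if "articles" in rules else set()) | (FILLER if "filler" in rules else set())
    # Compare words without trailing punctuation, but keep the original word if retained.
    kept = [w for w in text.split() if w.lower().strip(".,!?:;") not in drop]
    return " ".join(kept)


def cavemanify(reply: str, mode: str = "normal") -> str:
    rules = MODES[mode]
    out = []
    for part in FENCE.split(reply):
        if part.startswith("```"):
            out.append(part)  # code blocks pass through unchanged
        elif compressed := compress_prose(part, rules):
            out.append(compressed)  # empty prose segments are dropped
    return "\n".join(out)


reply = ("Sure! The bug is just a typo in the loop.\n"
         "```python\nfor i in range(3):\n    print(i)\n```\n"
         "Let me know if you need anything else.")
for mode in MODES:
    print(f"--- {mode}\n{cavemanify(reply, mode)}")
```

Rules such as quoting error messages exactly are not modeled here; the point is the boundary between compressible prose and untouchable code.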

The broader cost context gives the joke a sharper edge. Anthropic’s models are among the most expensive in terms of price per token. For developers running agentic workflows with dozens of turns per session, output verbosity is not a stylistic complaint. It’s a line item. If a caveman grunt can replace a five-sentence summary of what the model just did, those saved tokens add up across thousands of API calls.
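
A back-of-the-envelope Python sketch shows how per-call savings turn into a line item. Every input is an assumption: 135 output tokens saved per call (the 180-to-45 example from the Reddit post), 40 turns per session, 250 sessions a month, and an assumed price of $15 per million output tokens. `monthly_output_savings` is a hypothetical helper.

```python
def monthly_output_savings(tokens_saved_per_call: int, turns_per_session: int,
                           sessions_per_month: int,
                           output_price_per_m: float) -> tuple[int, float]:
    """Return (output tokens saved, dollars saved) per month; all inputs are assumptions."""
    calls = turns_per_session * sessions_per_month
    tokens = tokens_saved_per_call * calls
    dollars = tokens * output_price_per_m / 1_000_000
    return tokens, dollars


tokens, dollars = monthly_output_savings(180 - 45, 40, 250, 15.0)
print(f"{tokens:,} output tokens, ${dollars:,.2f} saved per month")
# prints: 1,350,000 output tokens, $20.25 saved per month
```

The result scales linearly with call volume and price, and it counts output only; the input context is billed as before.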

The caveman skill is installable with a single command via skills.sh and works globally across projects. Whether or not it makes Claude marginally less articulate, it has already made a lot of developers considerably less annoyed.
