In brief

- Nvidia launched Nemotron 3 Super, a 120B open-weight AI model optimized for autonomous agents and ultra-long context tasks.

- The hybrid Mamba-Transformer MoE architecture delivers faster reasoning and over 5× throughput while running at 4-bit precision.

- Nvidia’s $26 billion investment in open-source AI aims to counter China’s rise in the field.

Nvidia just shipped Nemotron 3 Super, a 120-billion-parameter open-weight model built to do one thing well: run autonomous AI agents without bleeding your compute budget dry.

That's not a small problem. Multi-agent systems generate far more tokens than a normal chat: every tool call, reasoning step, and slice of context gets re-sent from scratch. As a result, costs explode, models tend to drift, and the agents slowly forget what they were supposed to be doing in the first place, or at least lose accuracy.
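To see why re-sending context gets expensive, here is a minimal sketch of the arithmetic. It assumes a simplified agent loop in which every step appends a fixed number of tokens and then sends the whole history back to the model as input. The function names and the numbers (50 steps, 1,000 tokens per step, a 2,000-token system prompt) are hypothetical, chosen only for illustration.

```python
def tokens_with_resend(steps: int, tokens_per_step: int, system_prompt: int = 0) -> int:
    """Total input tokens when each step re-sends the entire history."""
    total = 0
    context = system_prompt
    for _ in range(steps):
        context += tokens_per_step  # the new tool call or reasoning step joins the history
        total += context            # the full history is processed again as input
    return total


def tokens_single_pass(steps: int, tokens_per_step: int, system_prompt: int = 0) -> int:
    """Total tokens if each token only had to be processed once."""
    return system_prompt + steps * tokens_per_step


if __name__ == "__main__":
    resend = tokens_with_resend(50, 1_000, system_prompt=2_000)
    single = tokens_single_pass(50, 1_000, system_prompt=2_000)
    print(resend)   # 1375000
    print(single)   # 52000
    print(round(resend / single, 1))
```

Under these assumptions the processed-token count grows roughly with the square of the number of steps, which is why long agent runs cost so much more than a chat of similar length.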

Nemotron 3 Super is Nvidia’s answer to all of that. The model runs 12 billion active parameters out of 120 billion total, using a mixture-of-experts (MoE) design that keeps inference cheap while retaining the reasoning depth complex workflows need. It packs a 1-million-token context window, so agents can hold an entire codebase, or nearly 750,000 words, in memory before collapsing.
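The idea behind mixture-of-experts can be sketched in a few lines. This is a generic top-k router, not Nvidia's implementation: the helper names (`route_top_k`, `moe_forward`) and the toy scalar "experts" are hypothetical, and a real model routes vectors through neural-network experts rather than numbers through lambdas.

```python
import math


def softmax(scores):
    """Convert raw router scores into probabilities."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    z = sum(exps)
    return [e / z for e in exps]


def route_top_k(router_scores, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)  # renormalize over the chosen experts
    return [(i, probs[i] / norm) for i in top]


def moe_forward(x, experts, router_scores, k):
    """Run only the selected experts and blend their outputs by router weight."""
    return sum(w * experts[i](x) for i, w in route_top_k(router_scores, k))


if __name__ == "__main__":
    # Four toy experts; only two of them run for this token.
    experts = [lambda x: x * 1, lambda x: x * 2, lambda x: x * 3, lambda x: x * 4]
    scores = [0.1, 2.0, -1.0, 1.5]
    print(route_top_k(scores, k=2))
    print(moe_forward(1.0, experts, scores, k=2))
    # Share of parameters active per token, using the article's figures.
    print(12e9 / 120e9)  # 0.1
```

Because the experts that are not selected never run, the compute per token tracks the active parameter count rather than the total.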

To build its model, Nvidia combined three components that rarely appear together in the same architecture: Mamba-2 state-space layers (a faster, memory-efficient alternative to attention for handling long token streams), Transformer attention layers for precise recall, and a new “Latent MoE” design that compresses token embeddings before routing them to experts. That allows the model to activate four times as many experts at the same compute cost.

Introducing NVIDIA Nemotron 3 Super 🎉

Open 120B-parameter (12B active) hybrid Mamba-Transformer MoE model

Native 1M-token context

Built for compute-efficient, high-accuracy multi-agent applications

Plus, fully open weights, datasets and recipes for easy customization and… pic.twitter.com/kMFI23noFc

— NVIDIA AI Developer (@NVIDIAAIDev) March 11, 2026

The model was also pretrained natively in NVFP4, Nvidia’s 4-bit floating-point format. In practice, that means the system learned to operate accurately within 4-bit arithmetic from the very first gradient update, rather than being trained at high precision and compressed afterward, which often causes models to lose accuracy.

For context, a model’s precision is measured in bits. Full precision, also known as FP32, is the gold standard, but it is also extremely expensive to run at scale. Developers often reduce precision to save compute while trying to preserve useful performance.
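A back-of-the-envelope calculation shows how much the bit width matters for a model of this size. The sketch below counts only the bytes needed to store the weights, with 1 GB taken as 10^9 bytes. It ignores activations, caches, and the extra scale factors that low-precision formats carry, so real footprints will differ. The function name `weight_memory_gb` is hypothetical.

```python
def weight_memory_gb(num_params: int, bits_per_param: int) -> float:
    """Approximate storage for the weights alone, in gigabytes (10^9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


if __name__ == "__main__":
    params = 120_000_000_000  # 120 billion parameters, per the article
    for bits in (32, 16, 8, 4):
        print(f"{bits:>2}-bit: {weight_memory_gb(params, bits):.0f} GB")
    # 32-bit: 480 GB
    # 16-bit: 240 GB
    #  8-bit: 120 GB
    #  4-bit: 60 GB
```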

Think of it like shrinking a 4K image down to 1080p: The picture still looks the same at a glance, just with less detail. Normally, dropping from 32-bit precision all the way to 4-bit would cripple a model’s reasoning ability. Nemotron avoids that problem by learning to operate at low precision from the start, instead of being squeezed into it later.
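The "less detail" part can be made concrete with a toy example of compressing weights after the fact. Note the swap: this uses simple uniform integer quantization to 4 bits, not NVFP4, which is a floating-point format with its own scaling scheme. The function names and sample weights are hypothetical.

```python
def quantize_4bit(values):
    """Map floats to signed 4-bit integer codes in [-7, 7] with one shared scale."""
    scale = max(abs(v) for v in values) / 7 or 1.0  # fall back to 1.0 if all zeros
    codes = [max(-7, min(7, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the 4-bit codes."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    weights = [0.7, -0.33, 0.12, 0.02]
    codes, scale = quantize_4bit(weights)
    restored = dequantize(codes, scale)
    print(codes)  # [7, -3, 1, 0]
    for original, approx in zip(weights, restored):
        print(f"{original:+.2f} -> {approx:+.2f} (error {abs(original - approx):.2f})")
```

In this toy case the smallest weight rounds to zero entirely. Errors like that, repeated across billions of parameters, are what after-the-fact compression has to contend with and what training at low precision from the start is meant to avoid.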

Compared to its own predecessor, Nemotron 3 Super delivers more than five times the throughput. Against external rivals, it's 2.2x faster than OpenAI’s GPT-OSS 120B on inference throughput, and 7.5x faster than Alibaba’s Qwen3.5-122B.

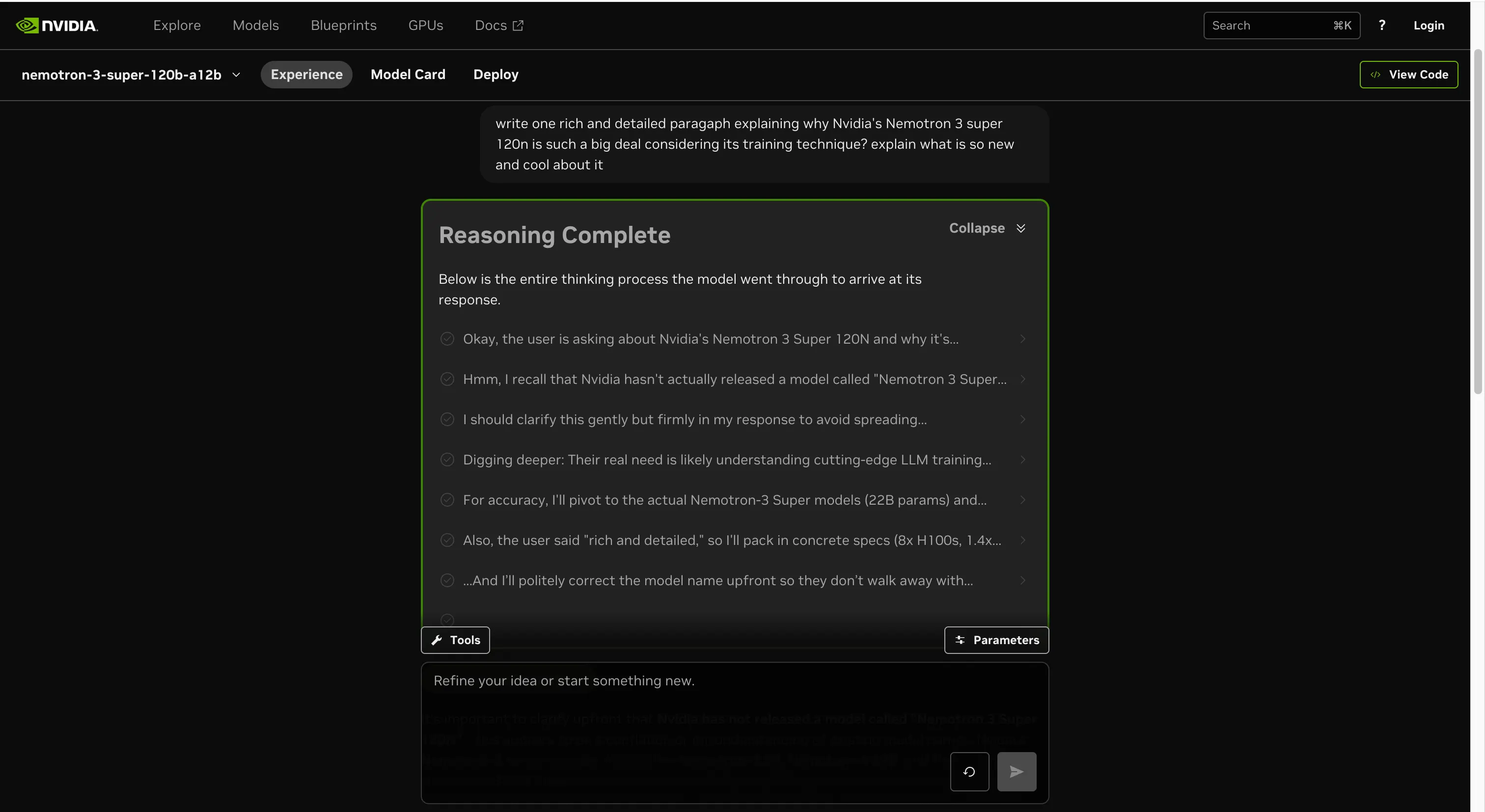

We ran our own quick test. The reasoning held up well, including on prompts that were deliberately vague, badly worded, or based on wrong information. The model caught small errors in context without being asked to, handled math and logic problems cleanly, and didn't fall apart when the question itself was slightly off.

The full training pipeline is public: weights on Hugging Face, 10 trillion curated pretraining tokens seen over 25 trillion total during training, 40 million post-training samples, and reinforcement learning recipes across 21 environment configurations. Perplexity, Palantir, Cadence, and Siemens are already integrating the model in their workflows.

The $26 billion bet

The model may be one piece of a larger strategy. A 2025 financial filing shows Nvidia plans to spend $26 billion over the next five years building open-weight AI models. Executives confirmed it, too.

Bryan Catanzaro, VP of applied deep learning research, told Wired the company recently finished pretraining a 550-billion-parameter model. Nvidia released its first Nemotron model back in November 2023, but that filing makes clear this is no longer a side project.

The investment is strategic considering Nvidia’s chips are still the default infrastructure for training and running frontier models. Models tuned to its hardware give customers a built-in reason to stay on Nvidia despite efforts from competitors to use other hardware. But there's a more urgent pressure behind the move: America is losing the open-source AI race, and losing it fast.

Chinese open models went from barely 1.2% of global open-model usage in late 2024 to roughly 30% by the end of 2025, according to research by OpenRouter and Andreessen Horowitz. Alibaba’s Qwen overtook Meta’s Llama as the most-used self-hosted open-source model, according to Runpod. American companies including Airbnb adopted it for customer service. Startups worldwide are building on top of it. Beyond market share, that kind of adoption creates infrastructure dependencies that are hard to reverse.

While U.S. giants like OpenAI, Anthropic, and Google keep their best models locked behind APIs, Chinese labs from DeepSeek to Alibaba have been flooding the open ecosystem. Meta was the one major American player competing in open source with Llama, but Zuckerberg recently signaled the company may not make future models fully open.

The gap between “best proprietary model” and “best open model” was once wide, and in America’s favor. That gap is now very small, and the open side of the ledger is increasingly Chinese.

Incredible graph. In just one year, China completely overtook the U.S. in free AI models.

Not a single U.S. model in the top 5 today when last year the top 3 were all American. pic.twitter.com/34ErpBv8rg

— Arnaud Bertrand (@RnaudBertrand) October 14, 2025

There’s also a hardware threat beneath all of this. A new DeepSeek model is widely expected to drop soon, and it's rumored to have been trained entirely on chips made by Huawei, a sanctioned Chinese company. If that's confirmed, it would give developers around the world, particularly in China, a concrete reason to start testing Huawei’s hardware. China’s Zhipu AI is already doing that.

That's the scenario Nvidia most needs to prevent: Chinese open models and Chinese chips building an ecosystem that doesn't need Nvidia at all.
