In brief

- AI engineer Kyle Hessling merged two of Jackrong’s distilled finetunes, one based on Claude Opus 4.6 and one on GLM-5.1, into a single “frankenmerge.”

- A post-merge “heal fine-tune” was required to fix garbled code output caused by the layer boundary between the two independently trained models.

- The model over-reasons on some tasks, but it’s a solvable problem.

You thought Qwopus was cool because it merged Qwen and Opus? Well, Kyle Hessling, an AI engineer with a lot of knowledge and free time, just took that recipe and threw GLM, one of the best reasoning models out there, into the mix. The result is an 18 billion parameter frankenmerge that fits on a cheap GPU and outperforms Alibaba’s newest 35B model.

For those who don't know, parameters are the numerical values baked into a neural network during training, like dials that the network can adjust: the more of them, the more knowledge and complexity the model can handle, and the more memory it needs to run.
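To make the link between parameter count and memory concrete, here is a rough back-of-the-envelope sketch. It counts only the bytes needed to store the weights themselves and ignores everything else a running model needs (activations, context cache, format overhead), so treat the numbers as a lower bound rather than a measurement. The function name `weight_memory_gb` is ours, for illustration only.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# 18 billion parameters, as reported for the merged model, at three precisions.
for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"{label}: {weight_memory_gb(18e9, bits):.1f} GB")
```

At 16 bits per weight, 18 billion parameters need about 36 GB for the weights alone; at roughly 4 bits per weight that drops to about 9 GB, which is why quantized versions are the ones that fit on consumer GPUs.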

Hessling, an AI infrastructure engineer, stacked two of Jackrong’s Qwen3.5 finetunes on top of each other: layers 0 through 31 from Qwopus 3.5-9B-v3.5, which distills Claude 4.6 Opus’s reasoning style into Qwen as a base model, and layers 32 through 63 from Qwen 3.5-9B-GLM5.1-Distill-v1, trained on reasoning data from z.AI’s GLM-5.1 teacher model on top of the same Qwen base.

The hypothesis: Give the model Opus-style structured planning in the first half of the reasoning and GLM’s problem decomposition scaffold in the second, for 64 layers total in a single model.

The technique is called a passthrough frankenmerge: no blending, no averaging of weights, just raw layer stacking. Hessling had to write his own merge script from scratch because existing tools don't support Qwen 3.5’s hybrid linear/full attention architecture. The resulting model passed 40 out of 44 capability tests, beating Alibaba’s Qwen 3.6-35B-A3B MoE, which requires 22 GB of VRAM, while running on just 9.2 GB in Q4_K_M quantization.
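For readers curious what "raw layer stacking" means in practice, here is a toy sketch. This is not Hessling's script, which we have not reproduced here. It assumes each donor model contributes the same number of layers and that the second donor's layers are simply renumbered to sit after the first donor's. The key names (`layers.N.…`, `embed.weight`, `norm.weight`) are hypothetical, and strings stand in for real weight tensors.

```python
import re

# Hypothetical naming scheme: "layers.<index>.<rest>"; real checkpoints differ.
LAYER_KEY = re.compile(r"^layers\.(\d+)\.(.+)$")


def passthrough_stack(donor_a: dict, donor_b: dict, layers_per_donor: int) -> dict:
    """Stack donor_b's layers after donor_a's, copying weights unchanged."""
    # Everything from donor A is kept as is, including non-layer tensors.
    merged = dict(donor_a)
    for name, tensor in donor_b.items():
        match = LAYER_KEY.match(name)
        if match is None:
            # Simplifying assumption: non-layer tensors come from donor A only.
            continue
        new_index = int(match.group(1)) + layers_per_donor
        merged[f"layers.{new_index}.{match.group(2)}"] = tensor
    return merged


donor_a = {"embed.weight": "A-embed", "layers.0.w": "A0", "layers.1.w": "A1", "norm.weight": "A-norm"}
donor_b = {"embed.weight": "B-embed", "layers.0.w": "B0", "layers.1.w": "B1", "norm.weight": "B-norm"}

merged = passthrough_stack(donor_a, donor_b, layers_per_donor=2)
print(sorted(merged))
```

No weight is averaged or modified, which is the point of a passthrough merge. A real merge would also have to update the model's configuration to the new layer count and decide which donor supplies the embeddings and output head; the choice made above is only one option.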

An NVIDIA RTX 3060 handles it fine… theoretically.

Hessling explains that making this model wasn’t easy. The raw merge used to throw garbled code. Even so, the test models he published went kind of viral among enthusiasts.

Hessling’s final fix was a “heal fine-tune,” basically a QLoRA (a small set of extra trained weights attached to the quantized model like an appendix, which heavily conditions the final output) targeting all attention and projections.
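To give a sense of why this kind of fine-tune is cheap, here is a small sketch of the arithmetic behind LoRA, the adapter method QLoRA builds on. Instead of retraining a full weight matrix, LoRA trains two thin matrices whose product is added to the frozen original. The layer size and rank below are hypothetical numbers picked for illustration, not the settings Hessling used.

```python
def full_matrix_params(d_in: int, d_out: int) -> int:
    """Parameters in one ordinary weight matrix of shape (d_out, d_in)."""
    return d_in * d_out


def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters in a LoRA adapter: A is (rank, d_in), B is (d_out, rank)."""
    return rank * (d_in + d_out)


# Hypothetical values for illustration only.
d_in = d_out = 4096
rank = 16

full = full_matrix_params(d_in, d_out)
adapter = lora_trainable_params(d_in, d_out, rank)
print(f"full matrix: {full:,} parameters")
print(f"LoRA adapter: {adapter:,} parameters ({adapter / full:.2%} of the full matrix)")
```

With these example numbers the adapter is under 1% of the size of the matrix it modifies, which is what makes a repair pass over an already-merged model practical on modest hardware.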

We tried it, and although the idea of having Qwen, Claude Opus, and GLM 5.1 running locally on our potato is beyond tempting, in reality we found that the model is so good at reasoning through problems that it ends up overthinking.

We tested it on an M1 MacBook running an MLX quantized version (a model optimized to run on Macs). When prompted to generate our usual test game, the reasoning chain ran so long it hit the token limit and gave us a nice long piece of reasoning with no working result in a zero-shot interaction. That’s a daily-use blocker for anyone wanting to run this locally on consumer hardware for any serious application.

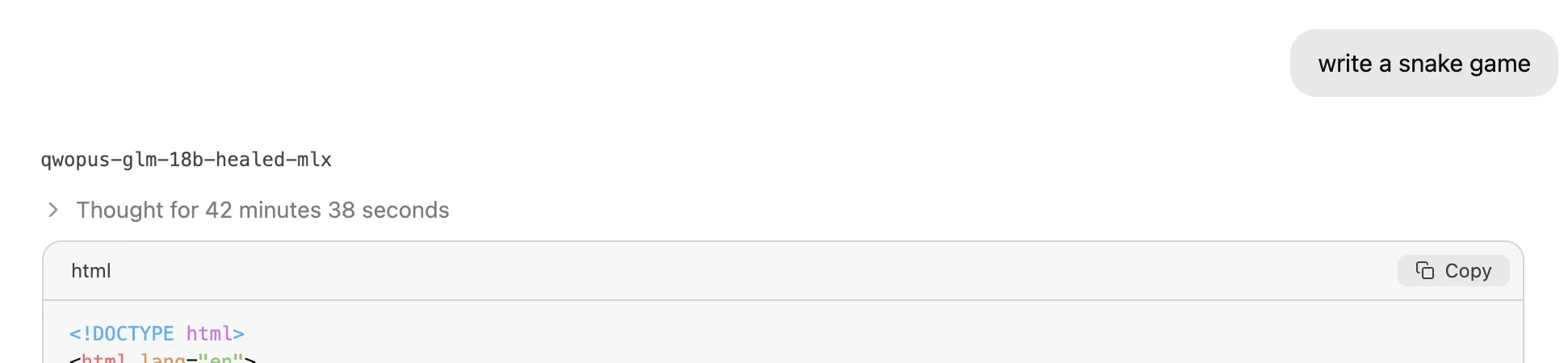

We went a bit softer and things were still tricky. A simple “write a Snake game” prompt took over 40 minutes of reasoning… lots of it.

You can see the results in our GitHub repository.

This is a known tension in the Qwopus lineage: Jackrong’s v2 finetunes were built to address Qwen 3.5’s tendency toward repetitive internal loops and “think more economically.” Stacking 64 layers of two reasoning distills appears to amplify that behavior on certain prompts.

That’s a solvable problem, and the open-source community will likely solve it. What matters here is the broader pattern: a pseudonymous developer publishes specialized finetunes with full training guides, another enthusiast stacks them with a custom script, runs 1,000 healing steps, and lands a model that outperforms a 35 billion parameter release from one of the world’s largest AI labs. The whole thing fits in a small file.

That’s what makes open source worth watching: not just the big labs releasing weights, but the layer-by-layer solutions, the specialization happening under the radar. The gap between a weekend project and a frontier deployment gets narrower as more developers join the community.

Jackrong has since mirrored Hessling’s repository, and the model gathered over three thousand downloads within its first two weeks of availability.
