In brief

- Hy3 preview is a 295 billion parameter Mixture-of-Experts model with only 21 billion active parameters, making it cheaper to run than most rivals of comparable capability.

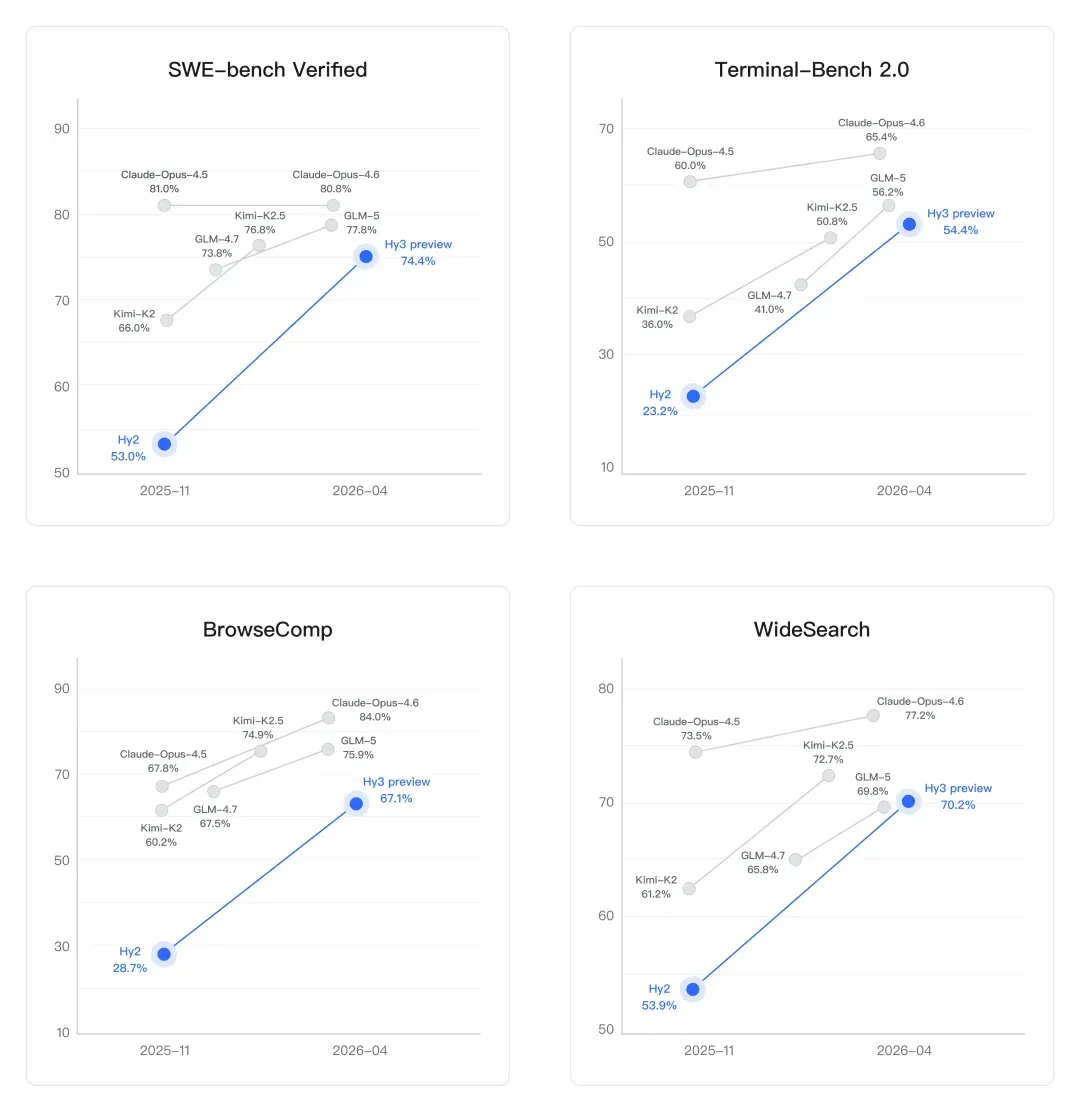

- On SWE-bench Verified, a coding benchmark testing real GitHub bug fixes, it jumped from 53% (Hy2) to 74.4%, a roughly 40% relative improvement over the previous generation.

- The model is already live across Tencent’s app ecosystem including Yuanbao, QQ, and Tencent Docs, with API access on Tencent Cloud starting at roughly $0.18 per million input tokens.

Tencent quietly dropped its most capable AI model yet on Thursday, and the benchmark numbers are hard to ignore. Hy3 preview, the company’s first model after a full infrastructure rebuild, went open-source today across GitHub, Hugging Face, and ModelScope.

It’s also available on Tencent Cloud’s official website, under a paid plan.

Hy3 packs 295 billion total parameters (a rough measure of a model’s potential breadth of knowledge) but only 21 billion are active at any given time. That’s the beauty of a Mixture-of-Experts architecture: the model routes each query to a specialized subset of its “expert” sub-networks instead of running everything at once. Less compute, lower cost, roughly similar output quality. It also supports up to 256,000 tokens of context, which is enough to swallow a full-length novel in a single prompt.
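
To make the routing idea concrete, here is a minimal toy sketch of top-k expert routing. It is not Tencent’s implementation: the expert count, the value of `k`, the scalar “experts”, and the helper names `route_top_k` and `moe_forward` are all assumptions made for illustration, since the article does not describe Hy3’s actual router.

```python
import math
import random


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_scores, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts.

    Weights are a softmax over the selected scores only, so they sum to 1.
    """
    order = sorted(range(len(router_scores)),
                   key=lambda i: router_scores[i], reverse=True)
    top = order[:k]
    weights = softmax([router_scores[i] for i in top])
    return list(zip(top, weights))


def moe_forward(x, experts, router_scores, k):
    """Run only the selected experts and blend their outputs by weight."""
    routed = route_top_k(router_scores, k)
    output = sum(w * experts[i](x) for i, w in routed)
    return output, [i for i, _ in routed]


# Hypothetical toy setup: 8 scalar "experts", 2 active per input.
NUM_EXPERTS = 8
TOP_K = 2
experts = [lambda x, s=s: s * x for s in range(1, NUM_EXPERTS + 1)]

rng = random.Random(0)
scores = [rng.gauss(0, 1) for _ in range(NUM_EXPERTS)]  # stand-in router logits
output, used = moe_forward(3.0, experts, scores, TOP_K)
print(f"experts used: {used} of {NUM_EXPERTS}")

# Active-parameter share from the figures in the article: 21B of 295B.
active_fraction = 21 / 295
print(f"active share: {active_fraction:.1%}")
```

The last two lines use the article’s own figures: 21 billion active out of 295 billion total works out to about 7% of the parameters doing work on any given token.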

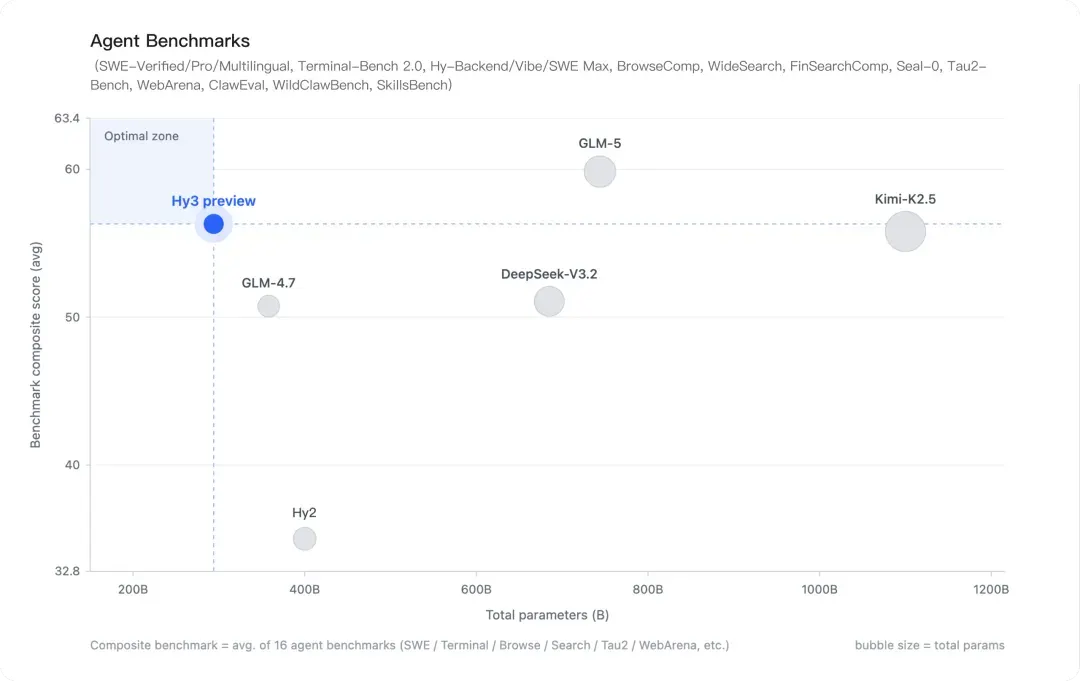

The model was built to balance three things Tencent says it stopped sacrificing for one another: capability breadth, honest evaluation, and cost-efficiency. Their previous flagship, Hy2, had over 400 billion parameters. Tencent explicitly walked that back, arguing 295 billion is the optimal sweet spot where reasoning fully matures but the cost of adding more parameters stops paying off.

This also doesn’t mean the model is worse. Models with better training and fewer parameters outperform bigger generalist ones fairly frequently.

On coding, the improvement is dramatic. SWE-bench Verified is a benchmark that tests whether a model can actually fix real bugs from GitHub repositories: not toy problems, but production code. Hy2 scored 53.0%. Hy3 preview scores 74.4%. That’s a roughly 40% relative jump in a single generation, landing it within range of Claude Opus 4.6 (80.8%), GLM-5 (77.8%), and Kimi-K2.5 (76.8%), though still behind all three. Terminal-Bench 2.0, which measures autonomous task execution in a real command-line environment, went from 23.2% to 54.4%, also a huge jump.
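
The “40%” figure is a relative gain, not a percentage-point gain, and the two are easy to confuse. The short sketch below reproduces both from the scores quoted above; the function names are illustrative only.

```python
def point_gain(old, new):
    """Absolute change in percentage points."""
    return new - old


def relative_gain(old, new):
    """Change as a fraction of the old score."""
    return (new - old) / old


# Scores (Hy2, Hy3 preview) as quoted in the article.
results = {
    "SWE-bench Verified": (53.0, 74.4),
    "Terminal-Bench 2.0": (23.2, 54.4),
}

for name, (old, new) in results.items():
    print(f"{name}: +{point_gain(old, new):.1f} points, "
          f"{relative_gain(old, new):.1%} relative")
```

On these numbers, SWE-bench Verified rises 21.4 points (about 40.4% relative), while Terminal-Bench 2.0 rises 31.2 points, which is more than double the earlier score.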

The model could be a particularly interesting choice for people building with agents. Agents run on complex instruction sets that involve memories, skills, and tool calls, and models often miss one of them, which can wreck a workflow or produce poor results. That’s why agentic capabilities are becoming more and more important for AI builders as this area becomes the most hyped thing in the industry. It’s also why the model was immediately made available on Openclaw.

Search and browsing agents, where models must retrieve, filter, and synthesize information from the open web without human guidance, also improved sharply. On BrowseComp, a benchmark tracking complex web research tasks, Hy3 preview reached 67.1% (up from Hy2’s 28.7%). On WideSearch, it hit 70.2%, outperforming GLM-5 and Kimi-K2.5 but trailing Claude Opus 4.6’s 77.2%.

In reasoning, the model topped every Chinese competitor on Tsinghua University’s math PhD qualifying exam (Spring 2026), scoring 88.4 averaged over three runs (avg@3). That’s a real-world exam, not a curated dataset, and it is the kind of evaluation Tencent says it’s prioritizing to avoid benchmark gaming. The model also scored 87.8 on CHSBO 2025 (China’s national high school biology olympiad), the highest among Chinese models in that category.

Hy3 preview started training in late January 2026 and launched Thursday, under three months from cold start to open-source release. Unusually fast for a frontier-class model. Tencent attributes it to a February infrastructure overhaul led by Yao Shunyu, its chief AI scientist, who pushed a full rebuild of the pretraining and reinforcement learning stack.

It’s a very different approach from what Chinese AI labs were doing a year ago, when DeepSeek’s R1 shocked the industry with its cost-efficiency.

Hy3 still trails OpenAI and Google DeepMind’s flagships, but by the size-to-performance ratio, Hy3 preview is hard to dismiss: the agent benchmark composite shows it in the “optimal zone” with ~295 billion parameters, ahead of DeepSeek-V3.2 (600 billion+) and matching Kimi-K2.5 (over 1 trillion parameters) at a fraction of the compute cost.

Hunyuan models have already been deployed across Yuanbao, CodeBuddy, WorkBuddy, QQ, and Tencent Docs. On CodeBuddy and WorkBuddy, first-token latency dropped 54%, end-to-end generation time fell 47%, and the model successfully ran agent workflows as long as 495 steps. Tencent Cloud is offering API access at roughly $0.18 per million input tokens and $0.59 per million output tokens, with personal Token Plan packages starting at around $4.10 per month.
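
For a rough sense of what those per-token prices mean in practice, the sketch below estimates the cost of a single request. The rates are the approximate figures quoted above; the function name and the example token counts are hypothetical, and actual billing may differ.

```python
# Approximate rates quoted in the article, in USD per million tokens.
INPUT_USD_PER_MILLION = 0.18
OUTPUT_USD_PER_MILLION = 0.59


def estimate_cost_usd(input_tokens, output_tokens):
    """Estimate the cost of one request from its token counts."""
    input_cost = input_tokens / 1_000_000 * INPUT_USD_PER_MILLION
    output_cost = output_tokens / 1_000_000 * OUTPUT_USD_PER_MILLION
    return input_cost + output_cost


# Hypothetical request: a full 256,000-token context plus a 2,000-token reply.
cost = estimate_cost_usd(256_000, 2_000)
print(f"estimated cost: ${cost:.4f}")
```

At these rates, even a request that fills the entire 256,000-token context window comes to under five cents.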
