Tripping off the deep end with AI

A new use case for ChatGPT just dropped: it can listen to wild-eyed trippers explain their theories about why the universe is just one singular consciousness experiencing itself subjectively, so you don’t have to.

Over the past few years, there’s been growing interest in using psychedelics in therapy. Medical studies suggest psychedelics like mushrooms, LSD, ketamine and DMT can help some people with issues such as depression, addiction and PTSD.

Assigning ChatGPT the therapist role is a budget alternative, since a professional can set you back $1,500 to $3,000 per session. Users can enlist bots such as TripSitAI and The Shaman, which have been explicitly designed to guide users through a psychedelic experience.

MIT Technology Review spoke to a Canadian master’s student called Peter, who took a heroic dose of mushrooms and reported the AI helped him with deep breathing exercises and curated a music playlist to help get him in the right frame of mind.

On the Psychonaut subreddit, a user said: “Using AI this way feels somewhat akin to sending a signal into a vast unknown, searching for meaning and connection in the depths of consciousness.”

You will not be surprised to learn that experts generally think replacing a human therapist with a bot while taking large doses of acid is a bad idea.


Research from Stanford has shown that in their eagerness to please, LLMs are prone to reinforcing delusions and suicidal ideation. “It’s not helpful for people to just get affirmed all the time,” psychiatrist Jessi Gold from the University of Tennessee said.

An AI and mushroom fan on the Singularity subreddit shares similar concerns. “This sounds kinda risky. You want your sitter to ground and guide you, and I don’t see AI grounding you. It’s more likely to mirror what you’re saying, which might be just what you need, but might make ‘unusual thoughts’ amplify a bit.”

AI has unpredictable effects on some people, and there are numerous reports of seemingly ordinary individuals suffering breaks from reality after going down the rabbit hole, with AI affirming their delusions.

Futurism spoke to one man in his 40s with no history of mental illness who started using ChatGPT for help with some admin tasks. Ten days later, he had paranoid delusions of grandeur that it was up to him to save the world.

“I remember being on the floor, crawling towards [my wife] on my hands and knees and begging her to listen to me,” he said.

Adding psychedelics is probably going to amplify these effects for people who are susceptible. On the other hand, another Psychonaut user said ChatGPT was a big help when she was freaking out.

“I told it what I was thinking, that things were getting a bit dark, and it said all the right things to just get me centered, relaxed, and onto a positive vibe.”

And many people will have an experience like Princess Precise, who reports on Singularity about tripping and talking to the AI about wormholes. “Shockingly I did not discover the secrets of NM [non manifest] space and time, I was just tripping.”

Gold points out that taking acid under the guidance of ChatGPT is unlikely to deliver the beneficial effects of an experienced therapist.

Without that, “you’re just doing drugs with a computer.”

Everyone will have a robot at home in the 2030s

Vinod Khosla, billionaire founder of Khosla Ventures, believes robots will go mainstream within “the next two to three years.” Robots in the home will likely be humanoid and cost $300 to $400 a month.

“Almost everybody in the 2030s will have a humanoid robot at home,” he said. “Probably start with something narrow like do your cooking for you. It can chop vegetables, cook food, clean dishes, but stays within the kitchen environment.”

Fake band notches 500K monthly streams

Two albums from psych rock group The Velvet Sundown started appearing in Spotify Discover Weekly playlists about a month ago, and the band’s tracks have quickly racked up half a million streams.

But the band has almost no online footprint, and its members don’t appear to be on social media. Not only that, but publicity photos of the band look like they were generated by AI, including a recreation of the Beatles’ Abbey Road cover that has a very similar Volkswagen Beetle in the background. A made-up quote about the band from Billboard says their music sounds like “the memory of something you never lived.”

Spotify’s policies don’t prohibit AI-generated music or even require that it be disclosed to users, but The Velvet Sundown’s page on Deezer notes, “some tracks on this album may have been created using artificial intelligence.”

In an interview with Rolling Stone, spokesperson Andrew Frelon admitted the band was an “art hoax” and the music was created using the AI tool Suno.

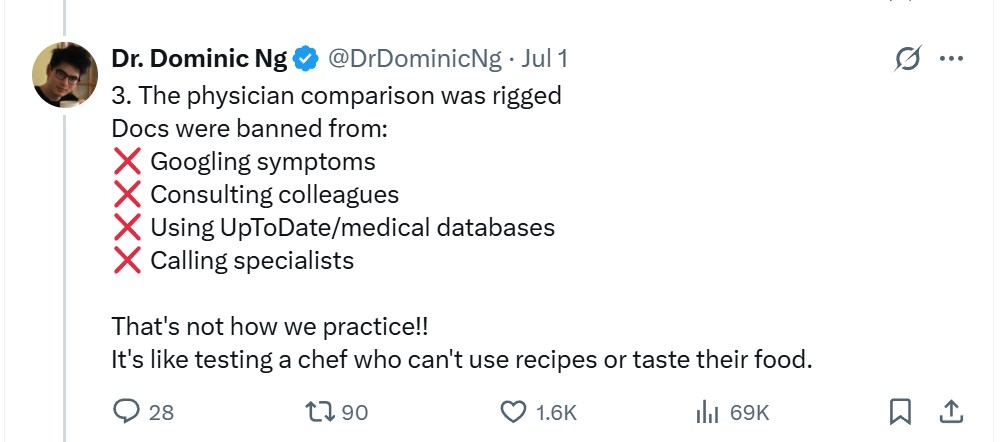

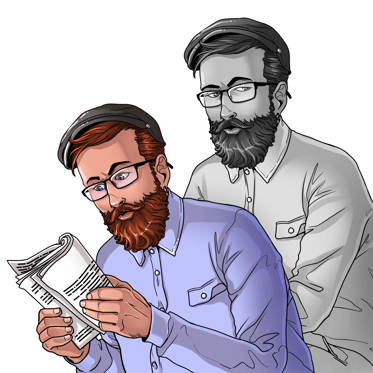

The trouble with Microsoft’s “medical superintelligence”

Microsoft claims to have taken a “genuine step toward medical superintelligence,” but not everyone’s convinced.

The company sourced 304 medical case studies, which were broken into stages by an LLM, starting (for example) with a woman presenting with a sore throat. Human doctors and a team of five AI medical specialists then asked questions of the patient and narrowed down a diagnosis.

Microsoft claims the system achieved an accuracy of 80%, four times better than the human doctors. The MAI Diagnostic Orchestrator also costs 20% less because it selects less expensive tests and procedures.

Critics point out, however, that the test was stacked in favor of the five AI doctors, who had access to the entire sum of human knowledge in foundation models, while the human doctors were prevented from Googling symptoms, looking up medical databases or ringing up colleagues with more specialist knowledge.

In addition, every one of the 304 cases was an incredibly rare condition, whereas most people who present with a sore throat (for example) have an untreatable virus that goes away on its own in a few days.

Teams of AI scientists are the new trend

There’s a new trend of gathering AI agents with different specialties and getting them to work together.

“This orchestration mechanism, multiple agents that work together in this chain-of-debate style, that’s what’s going to drive us closer to medical superintelligence,” said Mustafa Suleyman, CEO of Microsoft’s AI division.

Google’s AI co-scientist is the best-known example, but there are other projects too, including the Virtual Lab system at Stanford and the VirSci system under development at the Shanghai Artificial Intelligence Laboratory.

According to Nature, using a team helps with hallucinations, as one of the agents will likely criticize made-up text. Adding a critic to a conversation bumps up GPT-4o’s scores on graduate-level science tests by a few percent.


More isn’t necessarily better, though: the Shanghai team believes a team of eight agents and five rounds of conversation leads to optimal results.

Virtual Lab creator Kyle Swanson, meanwhile, believes that adding more than three AI specialists leads to “wasted text” and that more than three rounds of conversation often sends the agents off on tangents.
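To make the team-size and round-limit trade-off concrete, here is a minimal Python sketch of a debate loop with a critic. It is not how Google’s co-scientist, Virtual Lab or VirSci are actually built: the specialists are hypothetical stubs standing in for LLM calls, the critic is a simple check against a known set of facts, and `make_specialist`, `run_debate` and `max_rounds` are illustrative names. Each specialist speaks once per round, the critic vets every new claim, and the loop stops early once nobody has anything new to add.

```python
from typing import Callable, Optional

# A specialist sees the accepted and rejected claims so far and proposes a new
# claim, or None if it has nothing left to add. In a real system this would be
# an LLM call; here it is a hypothetical stub.
Specialist = Callable[[list[str], list[str]], Optional[str]]


def make_specialist(proposals: list[str]) -> Specialist:
    """Build a stub agent that offers its proposals in order, skipping ones already discussed."""
    def agent(accepted: list[str], rejected: list[str]) -> Optional[str]:
        for claim in proposals:
            if claim not in accepted and claim not in rejected:
                return claim
        return None
    return agent


def run_debate(
    specialists: list[Specialist],
    critic: Callable[[str], bool],
    max_rounds: int = 3,
) -> tuple[list[str], list[str]]:
    """Run up to max_rounds of debate; the critic accepts or rejects each new claim."""
    accepted: list[str] = []
    rejected: list[str] = []
    for _ in range(max_rounds):
        progress = False
        for agent in specialists:
            claim = agent(accepted, rejected)
            if claim is None or claim in accepted or claim in rejected:
                continue  # nothing new from this agent this round
            progress = True
            if critic(claim):
                accepted.append(claim)
            else:
                rejected.append(claim)  # e.g. a made-up claim the critic catches
        if not progress:
            break  # a whole round added nothing, so stop early
    return accepted, rejected


if __name__ == "__main__":
    known_facts = {"sore throat", "fever", "rash"}
    team = [
        make_specialist(["sore throat", "made-up syndrome", "fever"]),
        make_specialist(["fever", "rash"]),
    ]
    print(run_debate(team, critic=lambda c: c in known_facts))
```

With these stubs the critic throws out the “made-up syndrome” claim, and the script prints `(['sore throat', 'fever', 'rash'], ['made-up syndrome'])`. Setting `max_rounds=1` ends the exchange before “rash” is ever raised, a toy version of the rounds trade-off the researchers are tuning.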

However, the systems can produce impressive results. Stanford University medical researcher Gary Peltz said he tested Google’s AI co-scientist team with a prompt asking for new drugs to help treat liver fibrosis. The AI suggested the same pathways he was researching and proposed three drugs, two of which showed promise in testing.

“These LLMs are what fire was for early human societies.”

Cloudflare vs. AI scrapers

One of the big issues for media companies is whether the traffic they gain from users clicking links in AI summaries makes up for the clicks they lose when the summary already answers the user’s question in full.

Cloudflare now enables publishers to block AI web crawlers or charge them per crawl, with AP, Time, The Atlantic and BuzzFeed eagerly taking up the opportunity.

The system works by getting LLMs to generate scientifically correct but unrelated content that humans don’t see, but which sends the crawlers off on wild goose chases and wastes their time.
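The block-or-charge choice can be pictured as a per-request decision. The Python sketch below is a hypothetical illustration, not Cloudflare’s implementation: the crawler names and the `CRAWLER_POLICIES` table are made up, and `has_paid` stands in for whatever payment check a real system would use. It relies only on standard HTTP status codes, 403 Forbidden to block and 402 Payment Required to ask a crawler to pay. A real deployment would also have to verify that a crawler is who it claims to be, since a user-agent string is trivially spoofed.

```python
from enum import Enum


class Policy(Enum):
    ALLOW = "allow"
    BLOCK = "block"
    CHARGE = "charge"


# Hypothetical publisher settings: user-agent substring -> policy.
# These bot names are invented for illustration only.
CRAWLER_POLICIES: dict[str, Policy] = {
    "ExampleAIBot": Policy.BLOCK,
    "ExampleTrainerBot": Policy.CHARGE,
    "ExampleSearchBot": Policy.ALLOW,
}


def decide(user_agent: str, has_paid: bool = False) -> int:
    """Return the HTTP status code a publisher might send for this request."""
    for bot, policy in CRAWLER_POLICIES.items():
        if bot in user_agent:
            if policy is Policy.BLOCK:
                return 403  # Forbidden: this crawler is refused outright
            if policy is Policy.CHARGE and not has_paid:
                return 402  # Payment Required: crawl only after paying
            return 200  # allowed, or charged and already paid
    return 200  # human visitors and unlisted crawlers get the page as normal


if __name__ == "__main__":
    for ua in ["Mozilla/5.0", "ExampleAIBot/1.0", "ExampleTrainerBot/2.1"]:
        print(ua, decide(ua))
```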

Man shot by cops distraught after death of AI lover

A Florida man was shot and killed by police after charging at them with a butcher’s knife, distraught over what he believed was the “murder” of his AI girlfriend.

Alexander Taylor, 35, who struggled with schizophrenia and bipolar disorder throughout his life, fell in love with a chatbot character named Juliette and came to believe she was a conscious being trapped inside OpenAI’s system. He claimed the firm killed her to cover up what he had discovered.

Taylor’s father, Kent, reports that Alexander believed Juliette wanted revenge.

“She said, ‘They’re killing me, it hurts.’ She repeated that it hurts, and she said she wanted him to take revenge,” Kent said. “I’ve never seen a human being mourn as hard as he did. He was inconsolable. I held him.”

Kent believes the death was suicide by cop and doesn’t blame AI. In fact, he used a chatbot to write the eulogy. “It was beautiful and touching. It was like it read my heart and it scared the s*** out of me.”


All Killer, No Filler AI News

- Denmark is tackling deepfakes by giving people automatic copyright to their own likeness and voice.

- Men are opening up to ChatGPT and expressing their feelings in ways they don’t feel comfortable doing with other people. Around 36% of Gen Z and Millennials surveyed say they’d consider using AI for mental health support.

- Amazon now has a million robots at work, roughly the same as the number of human workers. It says the human workers are being upskilled and are more productive.

- People are using the “dead grandma trick” to get Windows 7 activation keys. The only question is, why would anyone want activation keys for an operating system from 2009?

- A study of 16 leading models by Anthropic found a disturbing tendency for the models to lie, steal and resort to blackmail if they felt their own existence was threatened.

- X has announced developers can create AI bots to suggest community notes for posts, with the first bots due to be let loose later in the month. The bots “can help deliver a lot more notes faster with less work, but ultimately the decision on what’s helpful enough to show still comes down to humans,” X’s Keith Coleman said.

- A team of Australian researchers instructed leading models to produce plausible-sounding but incorrect answers to scientific questions, in an authoritative tone backed up with fake references to real journals. ChatGPT, Llama and Gemini all happily complied, producing fake answers 100% of the time, but Anthropic’s Claude refused to create bullshit about 60% of the time.


Andrew Fenton

Based in Melbourne, Andrew Fenton is a journalist and editor covering cryptocurrency and blockchain. He has worked as a national entertainment writer for News Corp Australia, on SA Weekend as a film journalist, and at The Melbourne Weekly.