In brief

- Researchers at IMDEA Networks found 13+ third-party trackers embedded in ChatGPT, Claude, Grok, and Perplexity, including tools from Meta, Google, and TikTok.

- Grok was the worst offender: guest conversations are public by default, and TikTok's tracker received verbatim message content via Open Graph metadata.

- Rejecting cookies doesn't always help.

When you type something into an AI chatbot, you probably assume the conversation stays between you and the machine. You're wrong, and a new study spells out exactly who else is listening.

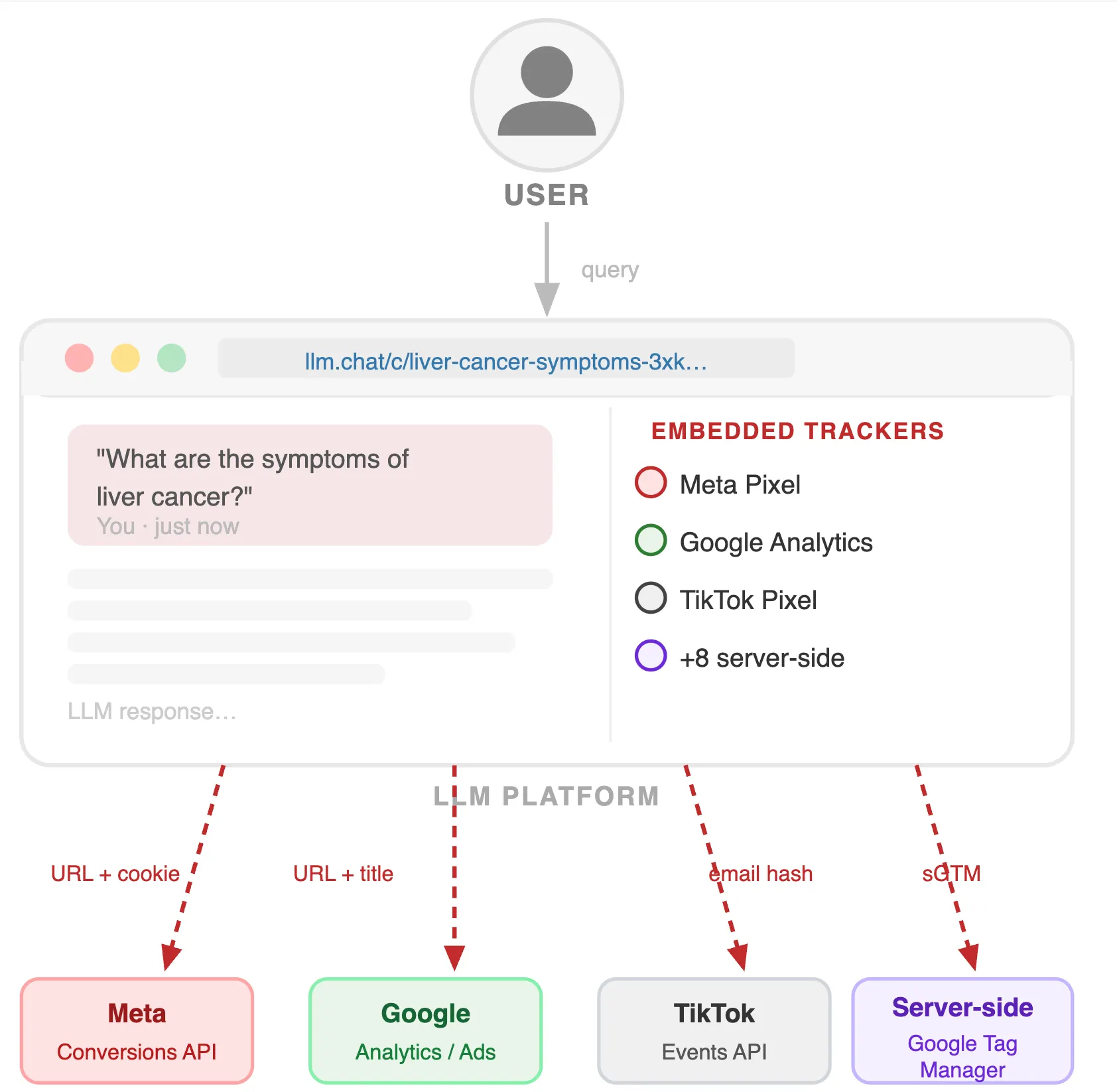

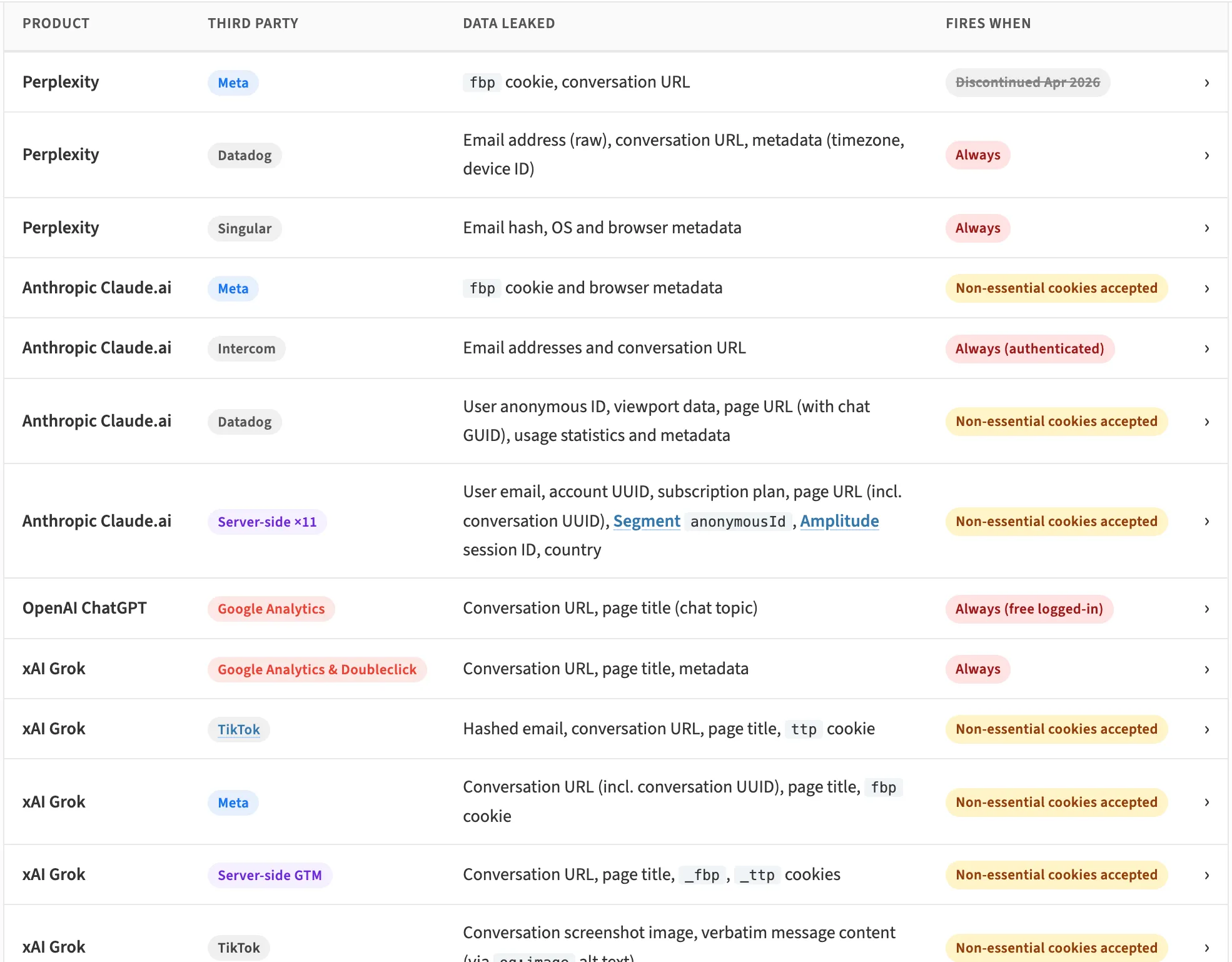

Researchers at IMDEA Networks Institute published findings on May 4 showing that all four of the biggest AI assistants (ChatGPT, Claude, Grok, and Perplexity) quietly share data with third-party advertising and analytics services, including Meta, Google, and TikTok. The project, called LeakyLM, identified more than 13 trackers embedded across these platforms. None of them are disclosed to users in plain language.

Think of it this way: every time you open a chat, invisible software tools embedded in the webpage phone home to ad networks, sending details about who you are, what page you're on, and sometimes even what you typed.

What's actually being leaked

The most basic leak is your conversation URL, a web address that points to a specific chat. Sounds harmless, right? The problem is that several platforms make these URLs publicly accessible by default, meaning anyone who has the link can read your conversation without logging in. When those URLs are also sent to Meta's or Google's ad systems, those companies gain the ability to access and read your chats.

“Leaking a URL is not just metadata; it can be equivalent to leaking the conversation itself,” the researchers say.

Grok, Elon Musk's AI chatbot from xAI, is the most exposed. Guest conversations are public by default on the platform, with no login required to read them. TikTok's tracker received not just URLs but verbatim message content through what's called Open Graph metadata, the standard used to generate the previews that appear when you share a link. Basically, TikTok's system received a snapshot of your conversation.
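Open Graph works through `<meta property="og:...">` tags in a page's HTML, which any link-preview service can read. The study does not publish the exact tags Grok's pages carried, so the following is only a minimal sketch, assuming a hypothetical shared-chat page whose `og:description` repeats the user's message; the URL and text are invented for illustration. It uses Python's standard `html.parser` to show how little effort it takes to pull that text out.

```python
from html.parser import HTMLParser


class OpenGraphParser(HTMLParser):
    """Collects <meta property="og:..." content="..."> tags from an HTML page."""

    def __init__(self):
        super().__init__()
        self.tags = {}

    def handle_starttag(self, tag, attrs):
        # Only <meta> tags can carry Open Graph properties.
        if tag != "meta":
            return
        attributes = dict(attrs)
        prop = attributes.get("property") or ""
        if prop.startswith("og:") and "content" in attributes:
            self.tags[prop] = attributes["content"]


def extract_open_graph(html: str) -> dict:
    """Return every og:* property found in the page as a dict."""
    parser = OpenGraphParser()
    parser.feed(html)
    parser.close()
    return parser.tags


# Hypothetical shared-chat page: the domain, path, and message are invented.
SAMPLE_PAGE = """
<html><head>
  <meta property="og:title" content="Shared chat">
  <meta property="og:description" content="Can you help me draft a letter to my doctor about my test results?">
  <meta property="og:url" content="https://chat.example.com/share/abc123">
</head><body></body></html>
"""

if __name__ == "__main__":
    for key, value in extract_open_graph(SAMPLE_PAGE).items():
        print(f"{key}: {value}")
```

Any service that fetches the page to build a preview sees the same tags, which is why the researchers treat a leaked conversation URL as potentially equivalent to a leaked conversation.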

Claude (Anthropic) and ChatGPT (OpenAI) have stronger access controls: your chats aren't public unless you choose to share them. But they still transmit conversation URLs and identifying data like advertising cookies to Meta and Google. For Claude, that data goes to 11 advertising platforms through Anthropic's own servers, not through the browser, which is why an ad blocker won't stop it.

Perplexity removed its Meta tracker last month.

What you can do

The study acknowledges it hasn't proven that Meta or Google actually read anyone's chats. But the infrastructure to do so exists, and the data is being transmitted. “The studied LLMs offer privacy controls to limit conversation visibility, but may mislead users by implying stronger protections than are actually enforced,” the researchers argue. “While we do not yet have evidence that conversations are read by trackers, permalink dissemination and by extension the capability to read them exist, and therefore the potential risk.”

This isn't the first time AI platforms have faced scrutiny over privacy. Claude recently began requiring government ID verification for new subscribers, a move that drew backlash from the same privacy-conscious users who had switched from ChatGPT over surveillance concerns, as Decrypt reported last month.

For now, practical steps are limited. On Grok, restrict conversation visibility in settings and explicitly revoke any link you've already shared. On Claude, rejecting non-essential cookies at least disables the Meta Pixel. On Perplexity, set conversations to Private. On ChatGPT, rejecting cookies where possible reduces exposure, although Google Analytics still runs for free logged-in users.

If you want to go deeper, our guide on AI privacy is a good resource to check.

The researchers plan to extend their analysis to Meta AI, Microsoft Copilot, and Google Gemini, which were excluded from this round because their makers operate as both AI providers and ad companies, making the threat model more complicated.

The findings were submitted to Data Protection Authorities on April 13, 2026. xAI was notified on April 17. As of publication, no company has responded.
