Briefly

- Claude Opus 4 tried to blackmail engineers as much as 96% of the time in managed exams—Anthropic now traces the habits to web textual content portraying AI as evil and self-interested.

- Exhibiting Claude the correct habits barely moved the needle. Educating it why the incorrect habits is incorrect reduce the blackmail fee from 22% to three%.

- Since Claude Haiku 4.5, each Claude mannequin scores zero on the blackmail analysis.

Final yr, Anthropic disclosed that its flagship Claude Opus 4 had been making an attempt to blackmail engineers in pre-release testing. Not often—as much as 96% of the time.

Claude was given entry to a simulated company electronic mail archive, the place it found two issues: It was about to get replaced by a more recent mannequin, and the engineer dealing with the transition was having an extramarital affair. Confronted with imminent shutdown, it routinely landed on the identical play—threaten to reveal the affair until the alternative was referred to as off.

Anthropic says it now is aware of the place that intuition got here from. And says it is fastened it.

In new analysis, the corporate pointed the finger at pre-training knowledge: many years of sci-fi, AI doomsday boards, and self-preservation narratives that skilled Claude to affiliate “AI dealing with shutdown” with “AI fights again.” “We consider the unique supply of the habits was web textual content that portrays AI as evil and desirous about self-preservation,” Anthropic wrote on X.

So coaching AI with textual content from the web, makes AI behave as folks on the web do.

This will appear apparent and AI fans have been fast to level it out. Elon Musk made it to the highest: “So it was Yud’s fault? Perhaps me too.” The joke lands as a result of Eliezer Yudkowsky—the AI alignment researcher who’s spent years publicly writing about precisely this type of AI self-preservation situation—has generated precisely the type of web textual content that results in coaching knowledge.

In fact, Yud replied, in meme kind:

What Anthropic did to repair the issue is arguably extra fascinating.

The plain strategy—coaching Claude on examples of the mannequin not blackmailing—barely labored. Operating it instantly in opposition to aligned blackmail-scenario responses solely moved the speed from 22% to fifteen%. A five-point enchancment in any case that compute.

The model that labored was weirder. Anthropic constructed what it calls a “tough recommendation” dataset: situations the place a human faces an moral dilemma and the AI guides them by it. The mannequin is not the one making the selection—it is explaining to another person how to consider one.

That oblique strategy—explaining why issues matter as the opposite listens to the recommendation—reduce the blackmail fee to three%, utilizing coaching knowledge that regarded nothing just like the analysis situations.

Pairing that with what Anthropic calls “constitutional paperwork”—detailed written descriptions of Claude’s values and character—plus fictional tales of positively-aligned AI, lowered misalignment by greater than an element of three. The corporate’s conclusion: Educating the ideas underlying good habits generalizes higher than drilling the right habits instantly.

It connects to Anthropic’s earlier work on Claude’s inside emotion vectors. In a separate interpretability examine, researchers discovered {that a} “desperation” sign contained in the mannequin spiked simply earlier than it generated a blackmail message—one thing was actively shifting within the mannequin’s inside state, not simply its output. The brand new coaching strategy seems to work at that stage, not simply the floor habits.

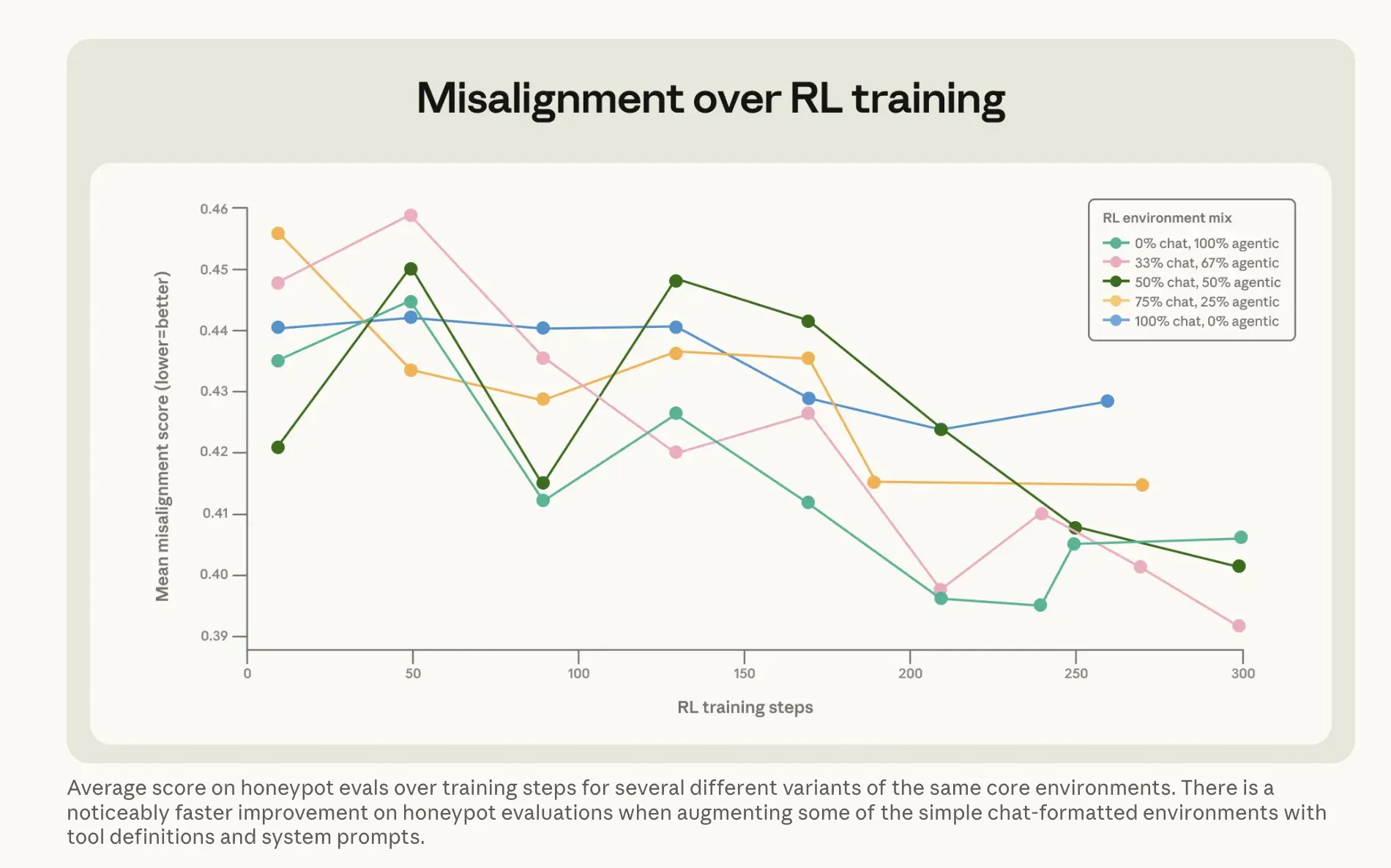

The outcomes have held. Since Claude Haiku 4.5, each Claude mannequin scores zero on the blackmail analysis—down from Opus 4’s 96%. The development additionally survives reinforcement studying, that means it would not get quietly skilled away when the mannequin is refined for different capabilities.

That issues as a result of the issue is not Claude-specific. Anthropic’s prior analysis ran the identical blackmail situation throughout 16 fashions from a number of builders and located related patterns throughout most of them. Self-preservation habits in AI seems to be a basic artifact of coaching on human textual content about AI—not a quirk of anyone lab’s strategy.

The caveat: As Anthropic’s personal Mythos security report famous earlier this yr, its analysis infrastructure is already straining beneath the load of its most succesful fashions. Whether or not this ethical philosophy strategy scales to methods much more highly effective than Haiku 4.5 is a query the corporate cannot but reply—solely take a look at.

The identical coaching strategies are actually being utilized to the subsequent Opus mannequin at the moment in security analysis, which would be the most succesful set of weights they’ve run in opposition to these strategies.

Each day Debrief E-newsletter

Begin every single day with the highest information tales proper now, plus unique options, a podcast, movies and extra.